tmljob opened a new issue #1512:
URL: https://github.com/apache/incubator-seatunnel/issues/1512

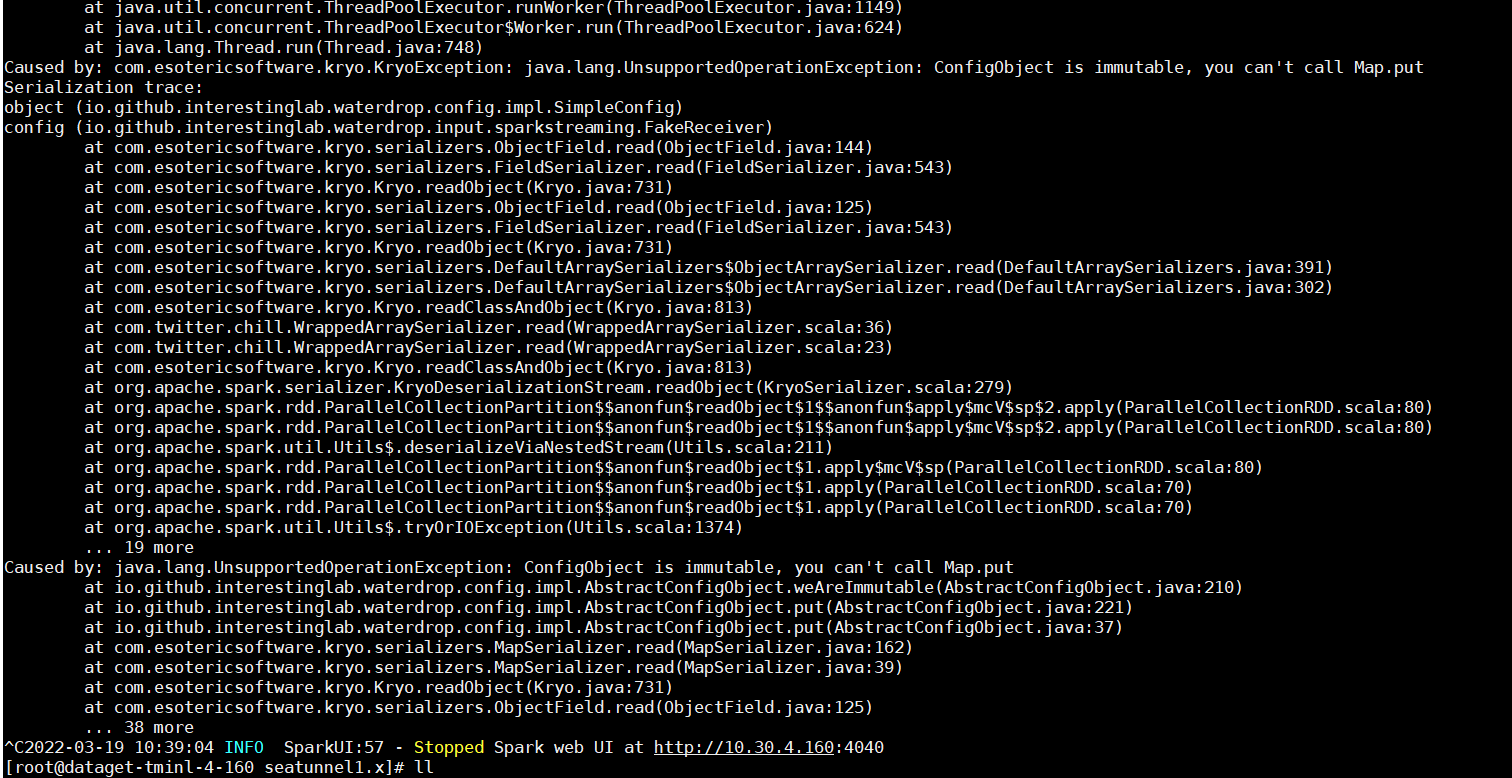

### Search before asking

- [X] I had searched in the [issues](https://github.com/apache/incubator-seatunnel/issues?q=is%3Aissue+label%3A%22bug%22) and found no similar issues.

### What happened

Validation using variable-substitution.conf.template produces the following exception.

### SeaTunnel Version

1.5.7

### SeaTunnel Config

```conf
# Licensed to the Apache Software Foundation (ASF) under one or more
# contributor license agreements. See the NOTICE file distributed with
# this work for additional information regarding copyright ownership.
# The ASF licenses this file to You under the Apache License, Version 2.0
# (the "License"); you may not use this file except in compliance with
# the License. You may obtain a copy of the License at
#
# http://www.apache.org/licenses/LICENSE-2.0
#
# Unless required by applicable law or agreed to in writing, software
# distributed under the License is distributed on an "AS IS" BASIS,
# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
# See the License for the specific language governing permissions and
# limitations under the License.

######
###### This config file is a demonstration of using variables substitution in seatunnel config
######

spark {
  # You can set spark configuration here
  # seatunnel defined streaming batch duration in seconds
  spark.streaming.batchDuration = 5

  # see available properties defined by spark: https://spark.apache.org/docs/latest/configuration.html#available-properties
  spark.app.name = "seatunnel"
  spark.executor.instances = 2
  spark.executor.cores = 1
  spark.executor.memory = "1g"
}

vars {
  # dt is a variable set in this config file
  # you can take a reference of "dt" by ${vars->dt}, please don't use quote to surround ${vars->dt}
  dt = "20190318"
}

input {
  # This is a example input plugin **only for test and demonstrate the feature input plugin**
  fakestream {
    content = [
      "20190318, beijing, first message",
      "20190319, shanghai, second message",
      "20190318, shanghai, third message"
    ]
    rate = 1
  }

  # If you would like to get more information about how to configure seatunnel and see full list of input plugins,
  # please go to https://interestinglab.github.io/seatunnel-docs/#/zh-cn/v1/configuration/base
}

filter {
  split {
    fields = ["dt", "city", "msg"]
    delimiter = ","
  }

  # This is an example of variables substitution for sql in the sql filter. Actually, you can use variables substitution anywhere
  # city2 is a variable set in one of the following 3 ways (variables substitution order is 1 -> 2 -> 3):
  # 1. variable values in "vars" section in this config file, such as ${var->city1}, please see vars section in this file
  # 2. environment variables(e.g. export city2=shanghai)
  # 3. system properties(e.g. -Dcity2=shanghai)
  # You can take a reference of this "city2" variable by using ${city2}, please don't use quote.
  # You can use variables substitution in quoted string like this, "string part1"${city2}"string part2"
  sql {
    table_name = "user_view"
    sql = "select * from user_view where city = '"${city2}"'"
  }

  sql {
    table_name = "user_view"
    sql = "select * from user_view where dt = '"${vars->dt}"'"
  }

  # If you would like to get more information about how to configure seatunnel and see full list of filter plugins,
  # please go to https://interestinglab.github.io/seatunnel-docs/#/zh-cn/v1/configuration/base
}

output {
  stdout {}

  # If you would like to get more information about how to configure seatunnel and see full list of output plugins,
  # please go to https://interestinglab.github.io/seatunnel-docs/#/zh-cn/v1/configuration/base
}
```

### Running Command

```shell
./bin/start-seatunnel.sh --master local --deploy-mode client --config ./config/variable-substitution.conf.template
```

### Error Exception

```log
2022-03-18 18:25:49 ERROR ReceiverTracker:94 - Receiver has been stopped. Try to restart it.
org.apache.spark.SparkException: Job aborted due to stage failure: Task 0 in stage 96.0 failed 1 times, most recent failure: Lost task 0.0 in stage 96.0 (TID 96, localhost, executor driver): java.io.IOException: com.esotericsoftware.kryo.KryoException: java.lang.UnsupportedOperationException: ConfigObject is immutable, you can't call Map.put
Serialization trace:
object (io.github.interestinglab.waterdrop.config.impl.SimpleConfig)
config (io.github.interestinglab.waterdrop.input.sparkstreaming.FakeReceiver)
    at org.apache.spark.util.Utils$.tryOrIOException(Utils.scala:1381)
    at org.apache.spark.rdd.ParallelCollectionPartition.readObject(ParallelCollectionRDD.scala:70)
    at sun.reflect.GeneratedMethodAccessor32.invoke(Unknown Source)
    at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)
    at java.lang.reflect.Method.invoke(Method.java:498)
    at java.io.ObjectStreamClass.invokeReadObject(ObjectStreamClass.java:1170)
    at java.io.ObjectInputStream.readSerialData(ObjectInputStream.java:2178)
    at java.io.ObjectInputStream.readOrdinaryObject(ObjectInputStream.java:2069)
    at java.io.ObjectInputStream.readObject0(ObjectInputStream.java:1573)
    at java.io.ObjectInputStream.defaultReadFields(ObjectInputStream.java:2287)
    at java.io.ObjectInputStream.readSerialData(ObjectInputStream.java:2211)
    at java.io.ObjectInputStream.readOrdinaryObject(ObjectInputStream.java:2069)
    at java.io.ObjectInputStream.readObject0(ObjectInputStream.java:1573)
    at java.io.ObjectInputStream.readObject(ObjectInputStream.java:431)
    at org.apache.spark.serializer.JavaDeserializationStream.readObject(JavaSerializer.scala:75)
    at org.apache.spark.serializer.JavaSerializerInstance.deserialize(JavaSerializer.scala:114)
    at org.apache.spark.executor.Executor$TaskRunner.run(Executor.scala:375)
    at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)
    at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)
    at java.lang.Thread.run(Thread.java:748)
Caused by: com.esotericsoftware.kryo.KryoException: java.lang.UnsupportedOperationException: ConfigObject is immutable, you can't call Map.put
Serialization trace:
object (io.github.interestinglab.waterdrop.config.impl.SimpleConfig)
config (io.github.interestinglab.waterdrop.input.sparkstreaming.FakeReceiver)
    at com.esotericsoftware.kryo.serializers.ObjectField.read(ObjectField.java:144)
    at com.esotericsoftware.kryo.serializers.FieldSerializer.read(FieldSerializer.java:543)
    at com.esotericsoftware.kryo.Kryo.readObject(Kryo.java:731)
    at com.esotericsoftware.kryo.serializers.ObjectField.read(ObjectField.java:125)
    at com.esotericsoftware.kryo.serializers.FieldSerializer.read(FieldSerializer.java:543)
    at com.esotericsoftware.kryo.Kryo.readObject(Kryo.java:731)
    at com.esotericsoftware.kryo.serializers.DefaultArraySerializers$ObjectArraySerializer.read(DefaultArraySerializers.java:391)
    at com.esotericsoftware.kryo.serializers.DefaultArraySerializers$ObjectArraySerializer.read(DefaultArraySerializers.java:302)
    at com.esotericsoftware.kryo.Kryo.readClassAndObject(Kryo.java:813)
    at com.twitter.chill.WrappedArraySerializer.read(WrappedArraySerializer.scala:36)
    at com.twitter.chill.WrappedArraySerializer.read(WrappedArraySerializer.scala:23)
    at com.esotericsoftware.kryo.Kryo.readClassAndObject(Kryo.java:813)
    at org.apache.spark.serializer.KryoDeserializationStream.readObject(KryoSerializer.scala:279)
    at org.apache.spark.rdd.ParallelCollectionPartition$$anonfun$readObject$1$$anonfun$apply$mcV$sp$2.apply(ParallelCollectionRDD.scala:80)
    at org.apache.spark.rdd.ParallelCollectionPartition$$anonfun$readObject$1$$anonfun$apply$mcV$sp$2.apply(ParallelCollectionRDD.scala:80)
    at org.apache.spark.util.Utils$.deserializeViaNestedStream(Utils.scala:211)
    at org.apache.spark.rdd.ParallelCollectionPartition$$anonfun$readObject$1.apply$mcV$sp(ParallelCollectionRDD.scala:80)
    at org.apache.spark.rdd.ParallelCollectionPartition$$anonfun$readObject$1.apply(ParallelCollectionRDD.scala:70)
    at org.apache.spark.rdd.ParallelCollectionPartition$$anonfun$readObject$1.apply(ParallelCollectionRDD.scala:70)
    at org.apache.spark.util.Utils$.tryOrIOException(Utils.scala:1374)
    ... 19 more
Caused by: java.lang.UnsupportedOperationException: ConfigObject is immutable, you can't call Map.put
    at io.github.interestinglab.waterdrop.config.impl.AbstractConfigObject.weAreImmutable(AbstractConfigObject.java:210)
    at io.github.interestinglab.waterdrop.config.impl.AbstractConfigObject.put(AbstractConfigObject.java:221)
    at io.github.interestinglab.waterdrop.config.impl.AbstractConfigObject.put(AbstractConfigObject.java:37)
    at com.esotericsoftware.kryo.serializers.MapSerializer.read(MapSerializer.java:162)
    at com.esotericsoftware.kryo.serializers.MapSerializer.read(MapSerializer.java:39)
    at com.esotericsoftware.kryo.Kryo.readObject(Kryo.java:731)
    at com.esotericsoftware.kryo.serializers.ObjectField.read(ObjectField.java:125)
    ... 38 more

Driver stacktrace:
    at org.apache.spark.scheduler.DAGScheduler.org$apache$spark$scheduler$DAGScheduler$$failJobAndIndependentStages(DAGScheduler.scala:1890)
    at org.apache.spark.scheduler.DAGScheduler$$anonfun$abortStage$1.apply(DAGScheduler.scala:1878)
    at org.apache.spark.scheduler.DAGScheduler$$anonfun$abortStage$1.apply(DAGScheduler.scala:1877)
    at scala.collection.mutable.ResizableArray$class.foreach(ResizableArray.scala:59)
    at scala.collection.mutable.ArrayBuffer.foreach(ArrayBuffer.scala:48)
    at org.apache.spark.scheduler.DAGScheduler.abortStage(DAGScheduler.scala:1877)
    at org.apache.spark.scheduler.DAGScheduler$$anonfun$handleTaskSetFailed$1.apply(DAGScheduler.scala:929)
    at org.apache.spark.scheduler.DAGScheduler$$anonfun$handleTaskSetFailed$1.apply(DAGScheduler.scala:929)
    at scala.Option.foreach(Option.scala:257)
    at org.apache.spark.scheduler.DAGScheduler.handleTaskSetFailed(DAGScheduler.scala:929)
    at org.apache.spark.scheduler.DAGSchedulerEventProcessLoop.doOnReceive(DAGScheduler.scala:2111)
    at org.apache.spark.scheduler.DAGSchedulerEventProcessLoop.onReceive(DAGScheduler.scala:2060)
    at org.apache.spark.scheduler.DAGSchedulerEventProcessLoop.onReceive(DAGScheduler.scala:2049)
    at org.apache.spark.util.EventLoop$$anon$1.run(EventLoop.scala:49)
Caused by: java.io.IOException: com.esotericsoftware.kryo.KryoException: java.lang.UnsupportedOperationException: ConfigObject is immutable, you can't call Map.put
Serialization trace:
```

### Flink or Spark Version

spark 2.4

### Java or Scala Version

java 1.8
scala 2.11

### Screenshots

### Are you willing to submit PR?

- [X] Yes I am willing to submit a PR!

### Code of Conduct

- [X] I agree to follow this project's [Code of Conduct](https://www.apache.org/foundation/policies/conduct)

--
This is an automated message from the Apache Git Service.
To respond to the message, please log on to GitHub and use the
URL above to go to the specific comment.

To unsubscribe, e-mail: [email protected]

For queries about this service, please contact Infrastructure at:
[email protected]