Tandoy opened a new issue #3471:

URL: https://github.com/apache/hudi/issues/3471

**Steps to reproduce the behavior:**

spark-submit --master yarn \
  --driver-memory 1G \
  --num-executors 2 \
  --executor-memory 1G \
  --executor-cores 4 \
  --deploy-mode cluster \
  --conf spark.yarn.executor.memoryOverhead=512 \
  --conf spark.yarn.driver.memoryOverhead=512 \
  --class org.apache.hudi.utilities.deltastreamer.HoodieDeltaStreamer `ls /home/appuser/tangzhi/hudi-0.8/hudi-release-0.8.0/packaging/hudi-utilities-bundle/target/hudi-utilities-bundle_2.11-0.8.0.jar` \
  --props file:///opt/apps/hudi/hudi-utilities/src/test/resources/delta-streamer-config/kafka.properties \
  --schemaprovider-class org.apache.hudi.utilities.schema.FilebasedSchemaProvider \
  --source-class org.apache.hudi.utilities.sources.JsonKafkaSource \
  --target-base-path hdfs://dxbigdata101:8020/user/hudi/test/data/hudi_test_occ \
  --op UPSERT \
  --target-table hudi_test_occ \
  --table-type COPY_ON_WRITE \
  --source-ordering-field uid \
  --source-limit 5000000

**Expected behavior:**

HoodieDeltaStreamer should ingest the Kafka data and write it to the target table.

**Environment Description:**

Hudi version : 0.8

Spark version : 2.4.0.cloudera2

Hadoop version : 2.6.0-cdh5.13.3

Hive version : 1.1.0-cdh5.13.3

Storage (HDFS/S3/GCS..) : HDFS

Running on Docker? (yes/no) : no

**kafka.properties**

hoodie.upsert.shuffle.parallelism=2

hoodie.insert.shuffle.parallelism=2

hoodie.bulkinsert.shuffle.parallelism=2

hoodie.datasource.write.recordkey.field=uid

hoodie.datasource.write.partitionpath.field=ts

hoodie.deltastreamer.schemaprovider.source.schema.file=hdfs://dxbigdata101:8020/user/hudi/test/data/schema.avsc

hoodie.deltastreamer.schemaprovider.target.schema.file=hdfs://dxbigdata101:8020/user/hudi/test/data/schema.avsc

hoodie.deltastreamer.source.kafka.topic=hudi_test_occ

group.id=occ

bootstrap.servers=dxbigdata103:9092

auto.offset.reset=earliest

hoodie.parquet.max.file.size=134217728

hoodie.datasource.write.keygenerator.class=org.apache.hudi.keygen.TimestampBasedKeyGenerator

hoodie.deltastreamer.keygen.timebased.timestamp.type=DATE_STRING

hoodie.deltastreamer.keygen.timebased.input.dateformat=yyyy-MM-dd HH:mm:ss

hoodie.deltastreamer.keygen.timebased.output.dateformat=yyyy/MM/dd
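The three `keygen.timebased` properties above are meant to turn each record's `ts` string into a date-based partition path. The following is a minimal Python sketch of that intended mapping, not Hudi's actual implementation: the function name `partition_path` and the sample timestamp are hypothetical, the Java patterns are hand-translated to `strptime`/`strftime` directives, and time zone handling is ignored.

```python
from datetime import datetime

# Hand-translated equivalents of the Java date patterns in kafka.properties
# (an assumption of this sketch; Hudi itself uses the Java patterns directly).
INPUT_FORMAT = "%Y-%m-%d %H:%M:%S"   # yyyy-MM-dd HH:mm:ss
OUTPUT_FORMAT = "%Y/%m/%d"           # yyyy/MM/dd


def partition_path(ts: str) -> str:
    """Parse a `ts` value with the input format and render the partition path."""
    return datetime.strptime(ts, INPUT_FORMAT).strftime(OUTPUT_FORMAT)


if __name__ == "__main__":
    # Hypothetical sample value; real values come from the Kafka messages.
    print(partition_path("2021-08-13 10:15:30"))  # prints 2021/08/13
```

A `ts` value in any other shape (for example an epoch number) raises `ValueError` in this sketch, so checking a few real messages against the input format is a cheap first diagnostic.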

**schema:**

{
  "type": "record",
  "name": "gmall_event",
  "fields": [
    {"name": "area", "type": "string"},
    {"name": "uid", "type": "long"},
    {"name": "itemid", "type": "string"},
    {"name": "npgid", "type": "string"},
    {"name": "evid", "type": "string"},
    {"name": "os", "type": "string"},
    {"name": "pgid", "type": "string"},
    {"name": "appid", "type": "string"},
    {"name": "mid", "type": "string"},
    {"name": "type", "type": "string"},
    {"name": "ts", "type": "string"}
  ]
}
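Because none of the schema fields declares a default or a nullable union, every Kafka message has to carry all eleven fields with matching JSON types. The sketch below is one way to spot-check a message copied from the topic against this schema, using only the Python standard library. The helper name `schema_mismatches` and the sample message are hypothetical, and the check only approximates what an Avro JSON conversion would require.

```python
import json

# Field names and JSON-side types taken from schema.avsc above
# (Avro "string" -> str, Avro "long" -> int).
SCHEMA_FIELDS = {
    "area": str, "uid": int, "itemid": str, "npgid": str, "evid": str,
    "os": str, "pgid": str, "appid": str, "mid": str, "type": str, "ts": str,
}


def schema_mismatches(message: str) -> list:
    """Return a list of human-readable problems; an empty list means the message fits."""
    record = json.loads(message)
    problems = []
    for name, expected in SCHEMA_FIELDS.items():
        if name not in record:
            problems.append(f"missing field: {name}")
            continue
        value = record[name]
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(value, bool) or not isinstance(value, expected):
            problems.append(f"wrong type for {name}: {type(value).__name__}")
    return problems


if __name__ == "__main__":
    # Hypothetical message; replace it with one consumed from hudi_test_occ.
    sample = json.dumps({
        "area": "beijing", "uid": 1001, "itemid": "i1", "npgid": "n1",
        "evid": "e1", "os": "android", "pgid": "p1", "appid": "a1",
        "mid": "m1", "type": "click", "ts": "2021-08-13 10:15:30",
    })
    print(schema_mismatches(sample))
```

An empty result for real messages would suggest the schema itself is not the reason the table stays empty.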

**Additional context:**

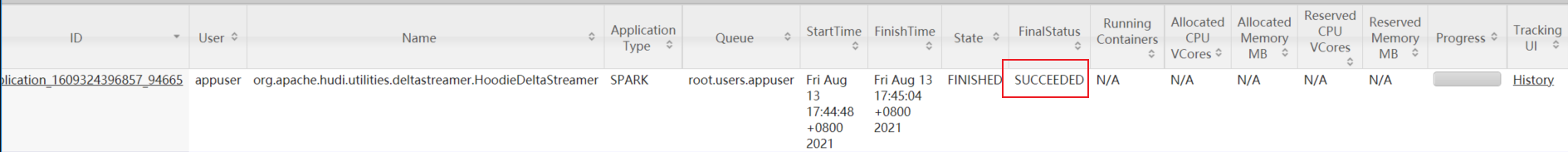

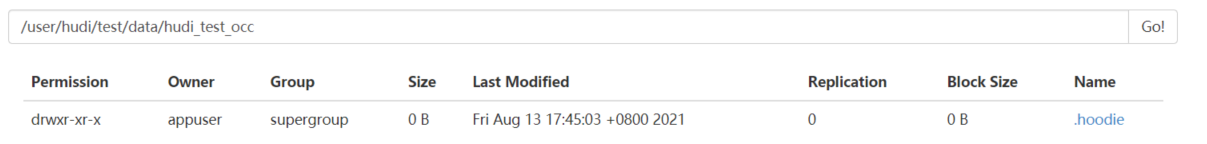

The Spark job did not report an error, but only the .hoodie directory was created under the target base path.

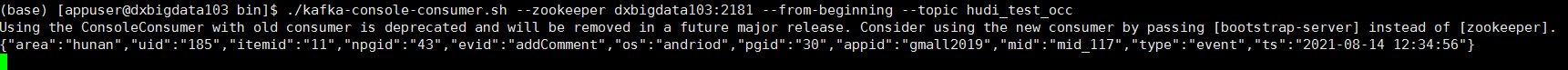

There is no data directory. However, the topic data can be consumed correctly from the shell.
