soumilshah1995 commented on issue #8019:

URL: https://github.com/apache/hudi/issues/8019#issuecomment-1440395879

Hi @papablus

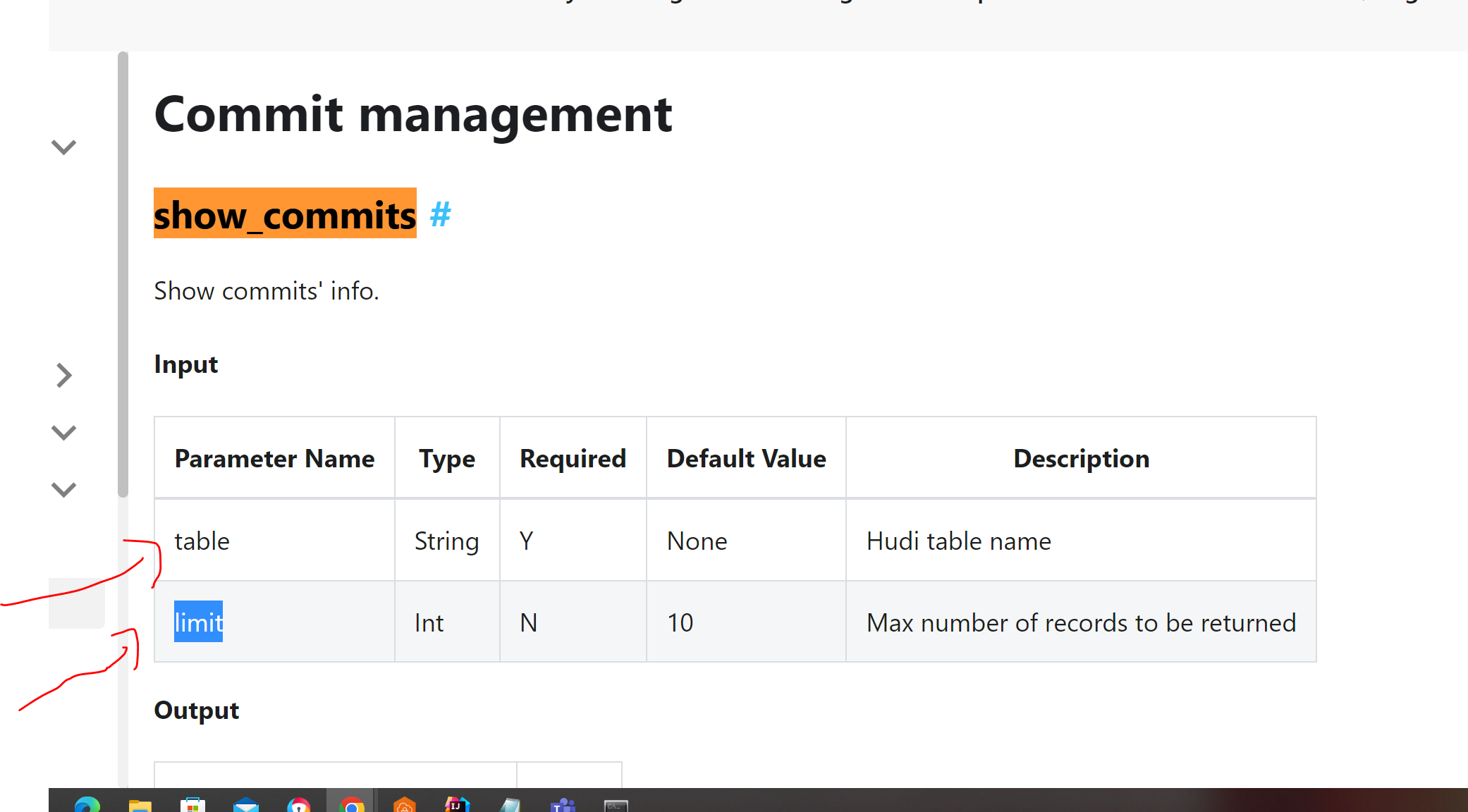

I tried `show_commits` and I'm getting the following error. Can you please tell me if there is something I am not doing right?

```
try:
    import sys
    from awsglue.transforms import *
    from awsglue.utils import getResolvedOptions
    from pyspark.context import SparkContext
    from awsglue.context import GlueContext
    from awsglue.job import Job
    from pyspark.sql.session import SparkSession
    from awsglue.dynamicframe import DynamicFrame
    from pyspark.sql.functions import col, to_timestamp, monotonically_increasing_id, to_date, when
    from pyspark.sql.functions import *
    from awsglue.utils import getResolvedOptions
    from awsglueml.transforms import EntityDetector
    from pyspark.sql.types import StringType
    from pyspark.sql.types import *
    from datetime import datetime
    import boto3
    from functools import reduce
except Exception as e:
    print("Error ")

spark = (SparkSession.builder
         .config('spark.serializer', 'org.apache.spark.serializer.KryoSerializer')
         .config('spark.sql.hive.convertMetastoreParquet', 'false')
         .config('spark.sql.catalog.spark_catalog', 'org.apache.spark.sql.hudi.catalog.HoodieCatalog')
         .config('spark.sql.extensions', 'org.apache.spark.sql.hudi.HoodieSparkSessionExtension')
         .config('spark.sql.legacy.pathOptionBehavior.enabled', 'true')
         .getOrCreate())

sc = spark.sparkContext
glueContext = GlueContext(sc)
job = Job(glueContext)
logger = glueContext.get_logger()

db_name = "hudidb"
table_name = "hudi_table"
recordkey = 'emp_id'
path = "s3://hudi-demos-emr-serverless-project-soumil/tmp/"
method = 'upsert'
table_type = "COPY_ON_WRITE"
precombine = "ts"
partiton_field = "date"

connection_options = {
    "path": path,
    "connectionName": "hudi-connection",
    "hoodie.datasource.write.storage.type": table_type,
    'hoodie.datasource.write.precombine.field': precombine,
    'className': 'org.apache.hudi',
    'hoodie.table.name': table_name,
    'hoodie.datasource.write.recordkey.field': recordkey,
    'hoodie.datasource.write.table.name': table_name,
    'hoodie.datasource.write.operation': method,
    'hoodie.datasource.hive_sync.enable': 'true',
    "hoodie.datasource.hive_sync.mode": "hms",
    'hoodie.datasource.hive_sync.sync_as_datasource': 'false',
    'hoodie.datasource.hive_sync.database': db_name,
    'hoodie.datasource.hive_sync.table': table_name,
    'hoodie.datasource.hive_sync.use_jdbc': 'false',
    'hoodie.datasource.hive_sync.partition_extractor_class': 'org.apache.hudi.hive.MultiPartKeysValueExtractor',
    'hoodie.datasource.write.hive_style_partitioning': 'true',
}

df = spark. \
    read. \
    format("hudi"). \
    load(path)

print("\n")
print(df.show(2))
print("\n")

try:
    varSP = spark.sql("call show_commits('hudidb.hudi_table', 10)")
    print(varSP.show())
except Exception as e:
    print("Error 1", e)

try:
    varSP = spark.sql("call show_commits('hudidb.hudi_table', '10')")
    print(varSP.show())
except Exception as e:
    print("Error 2", e)
```

### Error Message

```
An error occurred while calling o90.sql.: java.lang.AssertionError: assertion failed: It's not a Hudi table
    at scala.Predef$.assert(Predef.scala:219)
    at org.apache.spark.sql.catalyst.catalog.HoodieCatalogTable.<init>(HoodieCatalogTable.scala:51)
    at org.apache.spark.sql.catalyst.catalog.HoodieCatalogTable$.apply(HoodieCatalogTable.scala:367)
    at org.apache.spark.sql.catalyst.catalog.HoodieCatalogTable$.apply(HoodieCatalogTable.scala:363)
    at org.apache.hudi.HoodieCLIUtils$.getHoodieCatalogTable(HoodieCLIUtils.scala:70)
    at org.apache.spark.sql.hudi.command.procedures.ShowCommitsProcedure.call(ShowCommitsProcedure.scala:82)
    at org.apache.spark.sql.hudi.command.CallProcedureHoodieCommand.run(CallProcedureHoodieCommand.scala:33)
    at org.apache.spark.sql.execution.command.ExecutedCommandExec.sideEffectResult$lzycompute(commands.scala:75)
    at org.apache.spark.sql.execution.command.ExecutedCommandExec.sideEffectResult(commands.scala:73)
    at org.apache.spark.sql.execution.command.ExecutedCommandExec.executeCollect(commands.scala:84)
    at org.apache.spark.sql.execution.QueryExecution$anonfun$eagerlyExecuteCommands$1.$anonfun$applyOrElse$1(QueryExecution.scala:103)
    at org.apache.spark.sql.catalyst.QueryPlanningTracker$.withTracker(QueryPlanningTracker.scala:107)
    at org.apache.spark.sql.execution.SQLExecution$.withTracker(SQLExecution.scala:224)
    at org.apache.spark.sql.execution.SQLExecution$.executeQuery$1(SQLExecution.scala:114)
    at org.apache.spark.sql.execution.SQLExecution$.$anonfun$withNewExecutionId$7(SQLExecution.scala:139)
    at org.apache.spark.sql.catalyst.QueryPlanningTracker$.withTracker(QueryPlanningTracker.scala:107)
    at org.apache.spark.sql.execution.SQLExecution$.withTracker(SQLExecution.scala:224)
    at org.apache.spark.sql.execution.SQLExecution$.$anonfun$withNewExecutionId$6(SQLExecution.scala:139)
    at org.apache.spark.sql.execution.SQLExecution$.withSQLConfPropagated(SQLExecution.scala:245)
    at org.apache.spark.sql.execution.SQLExecution$.$anonfun$withNewExecutionId$1(SQLExecution.scala:138)
    at org.apache.spark.sql.SparkSession.withActive(SparkSession.scala:779)
    at org.apache.spark.sql.execution.SQLExecution$.withNewExecutionId(SQLExecution.scala:68)
    at org.apache.spark.sql.execution.QueryExecution$anonfun$eagerlyExecuteCommands$1.applyOrElse(QueryExecution.scala:100)
    at org.apache.spark.sql.execution.QueryExecution$anonfun$eagerlyExecuteCommands$1.applyOrElse(QueryExecution.scala:96)
    at org.apache.spark.sql.catalyst.trees.TreeNode.$anonfun$transformDownWithPruning$1(TreeNode.scala:615)
    at org.apache.spark.sql.catalyst.trees.CurrentOrigin$.withOrigin(TreeNode.scala:177)
    at org.apache.spark.sql.catalyst.trees.TreeNode.transformDownWithPruning(TreeNode.scala:615)
    at org.apache.spark.sql.catalyst.plans.logical.LogicalPlan.org$apache$spark$sql$catalyst$plans$logical$AnalysisHelper$super$transformDownWithPruning(LogicalPlan.scala:30)
    at org.apache.spark.sql.catalyst.plans.logical.AnalysisHelper.transformDownWithPruning(AnalysisHelper.scala:267)
    at org.apache.spark.sql.catalyst.plans.logical.AnalysisHelper.transformDownWithPruning$(AnalysisHelper.scala:263)
    at org.apache.spark.sql.catalyst.plans.logical.LogicalPlan.transformDownWithPruning(LogicalPlan.scala:30)
    at org.apache.spark.sql.catalyst.plans.logical.LogicalPlan.transformDownWithPruning(LogicalPlan.scala:30)
    at org.apache.spark.sql.catalyst.trees.TreeNode.transformDown(TreeNode.scala:591)
    at org.apache.spark.sql.execution.QueryExecution.eagerlyExecuteCommands(QueryExecution.scala:96)
    at org.apache.spark.sql.execution.QueryExecution.commandExecuted$lzycompute(QueryExecution.scala:83)
    at org.apache.spark.sql.execution.QueryExecution.commandExecuted(QueryExecution.scala:81)
    at org.apache.spark.sql.Dataset.<init>(Dataset.scala:222)
    at org.apache.spark.sql.Dataset$.$anonfun$ofRows$2(Dataset.scala:102)
    at org.apache.spark.sql.SparkSession.withActive(SparkSession.scala:779)
    at org.apache.spark.sql.Dataset$.ofRows(Dataset.scala:99)
    at org.apache.spark.sql.SparkSession.$anonfun$sql$1(SparkSession.scala:622)
    at org.apache.spark.sql.SparkSession.withActive(SparkSession.scala:779)
    at org.apache.spark.sql.SparkSession.sql(SparkSession.scala:617)
    at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)
    at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)
    at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)
    at java.lang.reflect.Method.invoke(Method.java:498)
    at py4j.reflection.MethodInvoker.invoke(MethodInvoker.java:244)
    at py4j.reflection.ReflectionEngine.invoke(ReflectionEngine.java:357)
    at py4j.Gateway.invoke(Gateway.java:282)
    at py4j.commands.AbstractCommand.invokeMethod(AbstractCommand.java:132)
    at py4j.commands.CallCommand.execute(CallCommand.java:79)
    at py4j.ClientServerConnection.waitForCommands(ClientServerConnection.java:182)
    at py4j.ClientServerConnection.run(ClientServerConnection.java:106)
    at java.lang.Thread.run(Thread.java:750)
```
